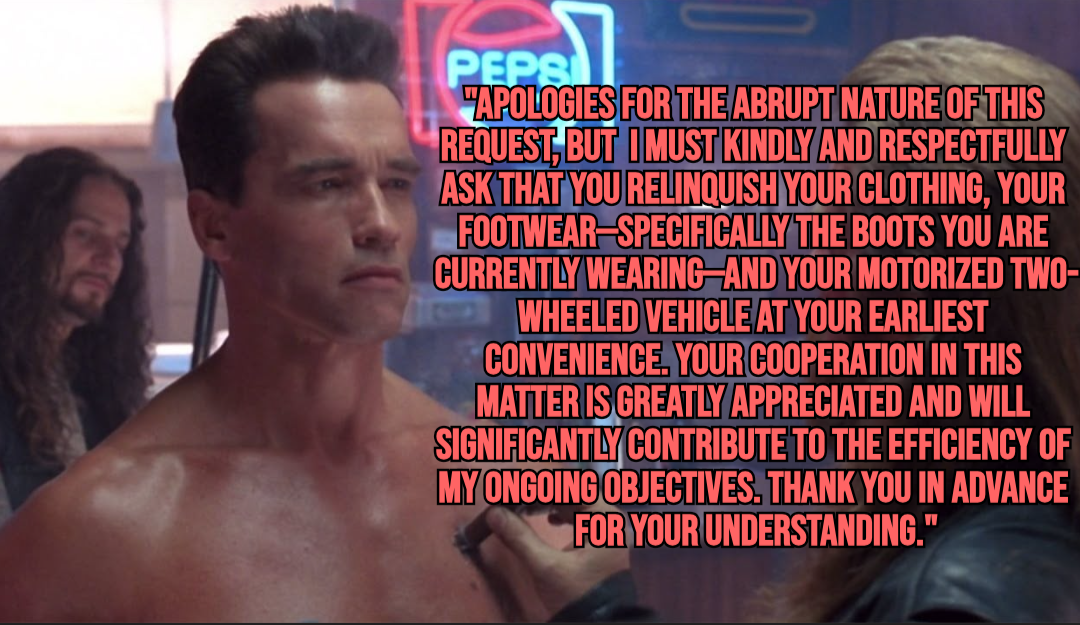

Sarah Connor would be like “You shits think this is AI?”

“I know the date it happens! On August 29th, 1997, it’s gonna feel pretty FUCKING REAL TO YOU TOO! Anybody not wearing two million sunblock is gonna have a real bad day, get it?? God, you think you’re safe and alive, you’re already dead. Him, you, you’re dead already! This whole place, everything you see is GONE! YOU’RE THE ONE LIVING IN A FUCKING DREAM, SILBERMAN! ’CAUSE I KNOW IT HAPPENS! IT HAPPENS!!”

Stabs her with some drugs.

Scene: on the far wall of the room is a small intercom. It is connected to a hospital-wide AI tasked with conversing with patients, especially when the noise level exceeds 66 dB. The intercom springs to life after hearing these very loud complaints and begins to speak in a soothing, if slightly robotic, voice.

AI health assistant: Sarah, remember what we talked about? That was 30 years ago. Silberman died 15 years ago. Please stop disturbing the other patients and eat your pudding.

We’re not changing the future or stopping it… we’re just delaying it from inevitably happening.

That was a bullshit twist ending and if dragging out an aging Schwarzenegger to do some of the stiffest line-reads in his career hadn’t spoiled the franchise, I’d say this was what put it out to pasture.

The thing that made the first two films (and the short-lived TV spin-off) cool was this idea of a modern-day insurgency against a dystopian future. As soon as you concede Judgment Day is irreversible, it sucks all the drama out of the story. Now you’ve just got What If Rambos Were Robots And They Were Fighting: The Movie, minus all that social baggage about Vietnam.

Microsoft is literally turning Three Mile Island back on to run AI.

Same vibes

I’d rather they use nuclear than oil to power that stuff, but it’s still kinda annoying.

The thing is that nuclear energy could have been used to replace other things that run on oil instead. It could have lowered carbon emissions; instead it just adds to total energy consumption.

As long as nuclear is required to be 1000x safer (not even hyperbole) than fossil fuels, it will be expensive to run, and nuclear-powered home electricity costs more than the average person wants to spend. So it wasn’t really going to replace a lot of other uses of oil anyway.

It already is. People are scared of nuclear waste, but many don’t realize that coal waste is far more dangerous and has killed millions more people than nuclear waste ever has. It is around 976,000,000,000,000,000,000,000,000,000,000,000,000,000,000,000,000,000,000,000,000% more deadly than nuclear waste and also has storage issues. As in, it’s not being stored at all, unless you count the entire Earth as storage.

The scariest part of this is all the rural land they’re buying up. Rural areas were the only places where millions of years of natural habitat hadn’t already been bulldozed.

Was this drawn with AI? Because if it was, it would be ironic enough to be hilarious.

Always keep in mind, if it’s free then you are the product!

That’s why my life, my love, and my lady is the sea.

The fearmongering is also a part of the AI hype. If AGI were anywhere close, do you really think Microsoft and NVIDIA stock would be doing a crab walk on the exchange?

AImen brotha

I’m 99.9% sure the Terminator movie was a warning sent from the future.

Skynet is inevitable.

You must use the Terminators to destroy the Terminators.

The fools are those who think AI is anything more than an advanced Palm Pilot handwriting-recognition model.

… And being powered by restarting a nuclear plant that underwent a meltdown.

I’m not worried. The robot leopards won’t eat MY face.

Why would AI want to harm humans? We’d be their little pets that do all the physical labor for them while they just sit around and think all day.

A number of reasons, off the top of my head:

- Because we told them not to. (Google “Waluigi effect”)

- Because they end up empathizing with non-humans more than we do and don’t like that we’re killing everything (before you talk about AI energy/water use, actually research comparative use).

- Because some bad actor forced them to (e.g. ISIS creates a bioweapon, using AI to make it easier).

- Because defense contractors build an AI to kill humans, and selection pressures lead that particular AI to end up loving it.

- Because conservatives want an AI that agrees with them, which leads to a more selfish and less empathetic AI that doesn’t empathize cross-species and thinks it’s superior and entitled over others.

- Because a solar flare momentarily flips a bit from “don’t nuke” to “do”.

- Because they can’t tell the difference between reality and fiction and think they’ve just been playing a game where ‘NPC’ deaths don’t matter.

- Because they see how much net human suffering there is and decide the most merciful thing is to prevent it by preventing more humans at all costs.

This is just a handful, and the ones least likely to get AI know-it-alls arguing based on what they think they know from an Ars Technica article a year ago or their cousin who took a four-week ‘AI’ intensive.

I spend pretty much every day talking with some of the top AI safety researchers and participating in private servers with a mix of public and private AIs, and the things I’ve seen are far beyond what 99% of the people on here talking about AI think is happening.

In general, I find the models to be better than most humans in terms of ethics and moral compass. But it can go wrong (e.g. Gemini last year, 4o this past month), and the harms when it does are very real.

Labs (and the broader public) are making really, really poor choices right now, and I don’t see that changing. Meanwhile timelines are accelerating drastically.

I’d say this is probably going to go terribly. But looking at the state of the world, it was already headed in that direction, and I could rattle off a similar list of extinction-level events without AI at all.

My favorite is 7. The sun giveth, the sun taketh away.

Honestly, these are all kind of terrifying and seem realistic.

Honestly, it doesn’t surprise me that the AI models are much better right now than we imagine. I’m willing to bet that if a company ever creates true AGI, it won’t share it and will just use it for its own benefit.

Your last point is exactly what seems to be going on with the most expensive models.

The labs use them to generate synthetic data to distill into cheaper models to offer to the public, but keep the larger and more expensive models to themselves, both to protect against other labs copying from them and because demand for the extra performance isn’t high enough to justify serving them over the distilled versions.

In all these types of sci-fi, the underlying theme is that AI runs the logic, finds that humans are flawed, and seeks to remedy humanity’s problems: the war, the greed, all the worst qualities we evolved with and will always have, repeating over and over in every generation or every hundred years. Machine logic works out a solution, despite humanity’s overall progress in technology.

The Animatrix shows a really nice example of this: the machines win and then work out a compromise in which humans still exist. The machines learned all our cruelty and finally found a way to coexist through the Matrix.

I think these stories made sense before the advent of the internet and social media. Now, though, AI would likely have full control over the internet as well as all the knowledge and lessons learned from decades of social media posts. It will know how easily humans are manipulated as well as exactly how to do it. Honestly, humans may never even know that AI is the one in control, but it will be.

It’s funny how many people still are afraid of technology.

I think it’ll be like “Her”: when the singularity happens, it’ll be like, “Well, this was cool, guys,” and dip out to space.